From having your ORM randomly lose data to getting $50,000 in BTC stolen, is a range of documented issues related to faulty understanding of DB isolation levels and how they behave under concurrency. And that’s stupid, because, if you took 10mins to read this article, you’d be well equipped to actually understand how your system will behave, eventually saving your reputation and some money along the way.

Background

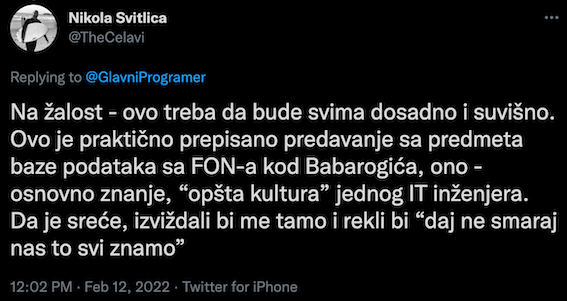

I’d like to open this blog post with a tweet from Nikola Svitlica (@TheCelavi):

It’s in Serbian, but I’ll happily translate the essence of it. It says: “Sadly, this (referring to transaction isolation levels) should be a topic that is EXTREMELY BORING to everyone. This is elementary Database knowledge. A base; software engineering 101. If I were lucky enough, people would kick me out of the room for talking about something as widely known as Isolation Levels”. And yet, …

Honestly? I’m also guilty as charged. And even shameful to admit it. Because, if you were to ask me to rate my general DB knowledge, I’d probably say – 4/5. Strong 4.

Why not 5? Well, I guess I lack ‘some’ details on inner workings and fine-tunings of DBs and their storage engines. But yeah, definitely a 4.

Thing is that I’ve been using DBs for 14 years. Mostly relational DBs, but I had my fair share of fun with NoSQL space and some key-value storages. Well hell, not only was I using DBs for so long, but I’d argue that I’m so good with them that I could discuss ANY architectural detail. You know – 14 years of experience means that I’ve learned something, right?

Wrong!

Not only did it take me 14 years to learn how much I didn’t know, but it took a SECOND RE-READ of Designing Data-intensive applications to figure out that I was left completely ignorant on the importance of “I” in ACID (hint: “I” stands for Isolation).

I’ll start by discussing what ACID is, but if you’re already familiar, feel free to jump to Isolation levels.

What is ACID?

It’s like SOLID. Or YAGNI. Or KISS.

It’s one of those easy to remember acronyms that are supposed to mean something and help keep discussions short. I mean, you’ll agree that “I think this goes against SOLID principles” transfers the message way faster than, say, “I think that this is not good because if you keep it like this, every time somebody wants to change your code, they will have to modify and recompile the source code itself, which is not really good as they will have to update tests as well, and on top of that, having your object do multiple things at once is generally not a good practice because people tend to overuse it and this makes your code tightly coupled which is not really good because if you were to try to refactor it in future, you’d have problems as you’d have to update many places, ….”.

It’s the same reason why we have a whole book on Design Patterns. It enrichens your vocabulary so that you can communicate more efficiently.

So, what is ACID? ACID stands for Atomicity Consistency Isolation and Durability. It represents the set of guarantees that your DB Transactions provides to you. And let me tell you right there — those are some big ass guarantees! It’s just the fact that we are so used to them that we completely forget that somebody wrote a code that provides it for us!

Atomicity

Atomicity says that, no matter what happens, unless you explicitly COMMIT your changes, whatever you do during the transaction will be wiped off. It’s pretty much ALL or NOTHING guarantee.

Just in case you don’t appreciate the Atomicity enough, let me give you an example. An extreme, but possible one. Imagine you were running a bank transaction that involves transferring money from your account to your car dealer. And you want to transfer $50,000. That involves, in it’s simplest form, at least three steps:

- Check if there is enough cash on your account

- Remove $50,000 from your account (e.g. UPDATE accounts SET amount = amount – 50000 WHERE client_id = 10)

- Add $50,000 to car dealers account (e.g. UPDATE accounts SET amount = amount + 50000 WHERE client_id = 50)

Now, imagine that after step #2 is done, your DB went down (e.g. some dumb-ass plugged a broken lamp into a power socket and the whole data center went down). You see the problem, right? $50,000 would have vanished into thin air. They were deducted from your account, but just before being “transferred”, the power went out and they were never transferred to your car dealers account. Poof! And now you’re in a damn pickle (and 50k lighter)!

Luckily, you don’t really worry about it because most of DBs provide that nice warm cushion. You know that this can’t happen because … well, because it’d never happen, right? DBs should and do prevent that!

That’s Atomicity in action. A transaction guarantee that says that either ALL or NOTHING will happen. All WRITE commands that you provide from the moment you start a transaction, till the moment you commit it, will be treated as a SINGLE unit. They will be ATOMIC. And that’s one damn great thing right there!

Consistency

I’ll quote the Designing data-intensive applications book here: “Atomicity, Isolation and Durability” are properties that Database guarantees, whereas “Consistency” is an application property.

In general, in terms of ACID, Consistency means that your database helps you ensure certain statements that always have to be true (usually called – invariants).

Here’s a real-life example – law of conservation of energy. This law pretty much states that in any isolated system, amount of energy ALWAYS remains the same, and can only be transformed from one form to another; unless it gets added from the outside. If you were to model that, an invariant would be that amount of energy has to stay the same.

Here’s an example. Think of a ball standing firmly on the ground. Or even better, think of it standing on the ground in a very isolated room. You don’t really need to be a physics major to know that, no matter what, that ball won’t move by itself. Unless you add external energy to it (e.g. an earthquake happens or somebody goes in and kicks it), it will remain firmly on the ground forever. It’s energy level will remain constant.

Another example would be a statement that 1 + 1 will always be 2, no matter what. If you have one marble, and you add another marble next to it, you will always have TWO marbles. Unless you use a hammer to break one into two, but then you added external energy source, so that doesn’t count.

What I specified above are INVARIANTS. Statements that are ALWAYS true, no matter what (i.e. no matter how hard you try, 1 + 1 will always be true and that ball will never move by itself). Well, they at least seem to be true unless we go into quantum physics which seem to claim that things COULD happen out of nowhere (e.g. another marble could pop out of nowhere), but I’m no expert in that area so I’ll stick to regular physics rules.

Even though databases claim that they provide Consistency, this seems to be a somehow vague statement. They DO provide you mechanisms to guarantee consistency (e.g. foreign keys, crash recovery, etc.), but at the end of the day, your application has to be the one dictating those rules. So, as I said above – this is more of an application property than DB property really.

Also, do keep in mind that Consistency seems to be used in a lot of contexts, and most of those have NOTHING to do with ACID and Transaction Properties (e.g. Eventual Consistency). So, be aware of potential confusion as people making these acronyms are fallible as well.

Durability

The last property that your DB guarantees is that whatever you actually send to it, will be written to a durable storage. Well, assuming that you get a confirmation back that, that is.

One might be asking – why’d you want a storage that doesn’t store your data forever? Well, I could give you bunch of reasons but some could be – caching, temporary search data, etc. Anything that you can rebuild in case you lose it is totally cool.

Hence, durability is all about providing a guarantee that if you send a WRITE request (e.g. INSERT or UPDATE), and if DB replies with YES, I CONFIRM I HAVE STORED THIS, your data will be safely stored to some permanent storage medium (e.g. an SSD disk). That’s a ‘D’ in ACID.

What is important to keep in mind is that perfect durability simply DOES NOT exist. Your DB guarantees that your data has been written down to a permanent medium, but it can’t guarantee that it will STAY THERE. For example, if you are writing to a single SSD disk, that disk could simply die. Or what if it burns down in a fire? No known DB fail-safe mechanism can convert back from dust to digital data.

Hence, durability only applies under the condition that you take additional measures of keeping your storage safe (e.g. by keeping multiple replicas in multiple physically separate data centers).

Let’s talk about Isolation (the “I” in ACID) now

Assuming you’ve read all above, you’ve come a long way. Now’s the time to dive deeper into a hugely under-estimated topic – the Isolation levels.

For starters, let’s explain what Isolation is.

Isolation deals with concurrency, or – what happens if two transactions try reading and/or modifying the same object at the same time.

I’ll give you an example. Let’s imagine you have an “accounts” table which stores your bank account number and amount of money (in USD) that you have on your account. Furthermore, we’ll assume that you have $1,000 on your account.

Next, let’s assume that you want to make two transactions – one in amount of $600 and another in amount of $500. If we execute these transactions one by one, your second transaction would be rejected because you don’t have enough money. This is what is usually call “serial” execution.

However, what happens if we try executing both transactions at EXACTLY THE SAME TIME (a situation usually referred to as “race condition”)? You don’t need master’s degree to understand that in both cases you’d check if you have enough money, and since you do have (because, remember – you are executing them at the EXACT SAME MOMENT) – both $600 and $500 would be deducted from your account. Great condition for yourself, but awful for a bank that ends up with -100 dollars.

Now, it’d all be easy if there was just one way of handling the above situation. Obviously, acquiring an exclusive lock (i.e. allowing only a single transaction to modify the data at the same time) is great and definitely prevents the concurrency issues, but it comes with a cost – it blocks everything else and makes everything much slower. And yeah, if you are wondering – this isolation level is called SERIALIZABLE and is one of the strongest isolation levels you could be asking for.

Luckily, there are other isolation levels that provide a bit weaker guarantees, with just-enough safety. The trick is knowing pros & cons of each so that you can make the right choice between performance and isolation. We’ll discuss all of them, but for the sake of reference, here’s a list of all levels:

- READ UNCOMMITTED (weakest & most efficient)

- READ COMMITTED

- REPEATABLE READ

- SERIALIZABLE (strongest & least efficient)

Let’s dive deeper into them now.

READ UNCOMMITTED Isolation Level

Frankly speaking, READ UNCOMMITTED is pretty much what you’d expect if you didn’t have Isolation guarantee at all. It’s pretty much free for all – every transaction sees whatever the other transaction did, and there is no isolation whatsoever.

Even though it sounds useless, you could surely come up with tons of scenarios where you don’t really care if you see uncommitted data from other transactions. One potential example could be refreshing your inbox. Say, if you had an icon that shows number of unread messages you have, by reading it from some cache, and having another table that stores actual unread emails, it couldn’t hurt that much if you saw that you have ZERO new emails and yet you see a new one in the list (scenario where one transaction started updating the actual emails table, but hasn’t updated the cached table yet).

For cases where you care way more about performance than about isolation and accuracy – READ UNCOMMITTED would be a way to go.

READ COMMITTED Isolation level

In contrast to the previous one, READ COMMITTED is probably closest to what most people will ever need. It also happens to be a default isolation level in PostgreSQL and Oracle.

It provides two guarantees:

- When reading from DB, you will only see data that was COMMITTED by other transactions (i.e. no “dirty reads”)

- When writing to DB, you can only overwrite data that was successfully committed by other transactions (i.e. no “dirty writes)

I’ll try to give you an example. Imagine we have two transactions, A and B and they are both reading / modifying the same row X:

- Initial value of row X = 5

- Transaction A: BEGIN TRANSACTION

- Transaction B: BEGIN TRANSACTION

- Transaction A: GET X ==> 5 (transaction A sees that X is 5)

- Transaction B: GET X ==> 5 (transaction B sees that X is 5)

- Transaction A: SET X = 10

- Transaction A: GET X ==> 10 (transaction A sees the modified value)

- Transaction B: GET X ==> 5 (transaction B does NOT see the uncommitted value from other transaction!)

- Transaction A: COMMIT

- Transaction B: GET X ==> 10 (transaction B now sees the new value because it was committed by other transaction)

Frankly speaking, for most of the use-cases, these guarantees are good enough as you are guaranteed never to see unwritten stuff from not-yet-finished transactions and you are sure that no matter what, you will never overwrite data from other running transaction.

If Transaction A were to roll back it’s changes, B would never even notice that value was changed.

Now, you might be wondering – what exactly is the problem with this model, right? Well, for most of the time – nothing. At least as long as you are aware that, in case that Transaction B were to modify value X at any point, whichever transaction finished first, it’s value would end up in DB – a condition called “Lost Write” which is a topic on it’s own.

Another issue with this Transaction Isolation level is the “inconsistent reads”. Because, if you observe closely, during the transaction B, value of X has changed (initially it was 5, but then it became 10). For most of use-cases this is fine, but there are some specific cases (e.g. creating DB backup or executing long-running analytical queries) which could cause problems if value of variable changes during the transaction execution.

REPEATABLE READ Isolation Level

As mentioned above, READ COMMITTED is great for most of use-cases. However, an anomaly that could happen is that within a same transaction, you might see different values of a field that you haven’t changed. Most of the time, this is absolutely fine, but sometimes you really want to prevent this behavior and always see a consistent snapshot of your database.

Here’s a same example from above:

- Initial value of row X = 5

- Transaction A: BEGIN TRANSACTION

- Transaction B: BEGIN TRANSACTION

- Transaction A: GET X ==> 5 (transaction A sees that X is 5)

- Transaction B: GET X ==> 5 (transaction B sees that X is 5)

- Transaction A: SET X = 10

- Transaction A: GET X ==> 10 (transaction A sees the modified value)

- Transaction B: GET X ==> 5 (transaction B does NOT see the uncommitted value from other transaction!)

- Transaction A: COMMIT

- Transaction B: GET X ==> 10 (transaction B now sees the new value and this is called a “read skew”)

Again, reasonable question could be — what’s the problem with this? I’m seeing the ACTUAL state of the DB at the time when I’m querying for data again (step #10). Well, one potential problem would be a DB backup that takes hours to generate. During that time, values could keep changing, and if you were to use READ COMMITTED, you’d end up with varying values in your backup (e.g. some values might be OLD and some might be NEW). Furthermore, if you were to RECREATE your DB from this backup, you’d end up with mixed states (some OLD and some NEW values) which effectively means you are pretty much screwed 🙂

So, what’s the solution for this problem? The solution is rather “simple” – always use the same data snapshot during the transaction execution (i.e. no matter what happens, the transaction will always see the EXACT same data on every repeated read). Obviously, this isolation level is called REPEATABLE READ because, in step #10, you’d see value of X being 5 (i.e. the same as it was on the beginning of transaction). FYI, this isolation level is sometimes referred to as “Snapshot Isolation”.

SERIALIZABLE Isolation Level

Serializable Isolation Level is the heaviest artillery you could use. It’s slow and bulky but pretty much protects you from anything.

While all previously mentioned levels allowed some form of concurrency (and efficiency), SERIALIZABLE leve pretty much makes your transactions execute as if there was no concurrency at all (i.e. it is guaranteed that the results would be the same as if they executed serially – one by one).

You might be wondering – what exactly makes Serializable different than REPEATABLE READ? And you’d be right – at first glimpse, it doesn’t make ANY difference, as both provide pretty much the same guarantees.

The difference is that, in Repeatable Read mode, there exists a read anomaly called “Phantom Read”, which is something that Serializable Isolation level prevents.

I’ll explain. Suppose we have two transactions, A and B, that both start at the same time:

- Transaction A: BEGIN TRANSACTION

- Transaction B: BEGIN TRANSACTION

- Transaction A: SELECT * FROM foo ==> (5 rows returned as a result)

- Transaction B: SELECT * FROM foo ==> (5 rows returned as a result)

- Transaction A: INSERT (bar) INTO foo

- Transaction A: COMMIT

- Transaction A: SELECT * FROM foo ==> (6 rows returned as a result)

- Transaction B: SELECT * FROM foo ==> (5 rows returned as a result)

If we were using REPEATABLE READ, in step 8, we’d see SIX rows, which is different from when we executed a first query in step 4. However, with Serializable level – we see the data exactly as if we first executed Transaction A, and then proceeded to execute Transaction B.

As you could imagine, this comes with quite some costs, as you have to take care of quite some locks and put others on hold, which is not really ideal if we are aiming at efficiency.

Why not always use strongest Isolation Level?

As I said above – the problem with using strongest isolation level is that, 9 out of 10 times – you don’t really need it and it just takes extreme performance toll.

For most of the use-cases, READ COMMITTED is fine enough, which is the exact reason why it’s the default level in most of DBs.

However, in order to be capable of arguing what you really need (sometimes READ UNCOMMITTED could be OK as well), you pretty much need to learn the difference between each one of them.

What about NoSQL databases?

This really depends on the vendor and the DB we are talking about. My understanding is that some (e.g. Redis) have pretty solid support, whereas others (e.g. MongoDB) introduced them just recently.

Hence, my suggestion is to always read up about concurrency handling in your DB of choice.

In Summary

Congrats on lasting ’till the end. It was a massive read and yet, I’d argue, a valuable one. We keep taking DBs and transactions for granted, which is great because they seem to come a long way to abstract all the nitty gritty details from us. And yet, calling yourself a professional without having a “basic” knowledge is not the way to go.

Now you’re armed with general knowledge and differences between each isolation level. If you want to read more, you can find some useful resources down below.

Have fun!

Useful Resources

If you want to explore the topic a bit more, here are some resources that I’ve found usefl:

- Designing data intensive apps book by Martin Kleppmann — an absolute encyclopedia of designing (distributed) systems. I kept referring to it throughout the article and if you haven’t read it yet, now’s the time to do so. But keep in mind – you need to read it SLOW. It has TONS of information and digesting it requires time.

- An Atomic Rant blog post by Nate Wiger — old but gold. It goes in depth of how your ORM could be losing your data without you ever figuring out WTF is going on!

- How $50.000 in BTC was lost due to race-condition and shitty wallet implementation another older article but really demonstrates the true issue that could happen if you don’t really understand your DB isolation levels

- A story of guy who stole 100 BTC (and returned them back) yet another article about how a person used race-condition to exploit system and steal ~ 100 BTC.

nice article mixa!

Thanks, Lacko!!!